Is AI Intelligent? We're Asking the Wrong Question.

Mar 7, 2025

The debate around artificial intelligence often centers on a fascinating but ultimately distracting question: "Is AI truly intelligent?" While philosophers, technologists, and researchers grapple with definitions of consciousness and intelligence, perhaps we're missing a more practical and immediate consideration – is AI useful?

The Intelligence Debate: A Philosophical Quagmire

The question of machine intelligence leads us down complex philosophical paths that have no clear endpoints. What constitutes intelligence? Can machines truly think, or are they merely sophisticated pattern-matching systems? Do they possess consciousness, or are they elaborate simulations of understanding? These questions have occupied great minds from Alan Turing to contemporary philosophers like David Chalmers and Nick Bostrom, yet they remain fundamentally unresolvable with our current understanding of consciousness and intelligence.

The challenge becomes even more apparent when we consider that even human intelligence defies simple definition. Psychologists have proposed multiple theories of intelligence, from Howard Gardner's theory of multiple intelligences to Robert Sternberg's triarchic model, yet none capture the full complexity of human cognition. We struggle with fundamental questions about our own minds: Is intelligence a single general factor or a collection of distinct capabilities? How do we account for creativity, emotional intelligence, or practical wisdom? If we cannot definitively measure and understand intelligence in ourselves – beings we know possess it – how can we hope to assess it definitively in machines?

This philosophical puzzle, while intellectually stimulating and worthy of academic pursuit, may be holding us back from more productive discussions about AI's role in society. We risk becoming so entangled in abstract questions that we lose sight of concrete opportunities and challenges.

Shifting the Conversation to Utility

Instead of debating whether ChatGPT "understands" language in the same way humans do, or whether deep learning models truly "think" about the problems they solve, we should focus on what these tools can actually accomplish in the real world.

Consider the medical field, where AI systems are already demonstrating remarkable utility. Radiologists are using machine learning algorithms to detect subtle patterns in medical imaging that might escape human notice, leading to earlier cancer diagnoses and better patient outcomes. These systems don't need to experience the satisfaction of helping a patient or feel empathy for human suffering – they simply need to identify pathological changes with high accuracy and reliability.

In engineering and design, AI tools are enabling professionals to explore vast solution spaces that would be impossible to navigate manually. Structural engineers use AI to optimize building designs for both safety and sustainability, while aerospace engineers employ machine learning to improve fuel efficiency in aircraft design. The question isn't whether these systems understand the principles of physics or materials science in a human-like way, but whether they can reliably generate designs that meet performance criteria.

The same principle applies across numerous domains. Financial institutions use AI to detect fraudulent transactions not because the algorithms experience moral outrage at deception, but because they can identify suspicious patterns with speed and accuracy that human analysts cannot match. Climate researchers employ machine learning to process massive datasets and identify trends that inform our understanding of environmental change. Software developers use AI coding assistants not because these tools appreciate elegant code, but because they can suggest solutions and catch errors that improve productivity and code quality.

The Pragmatic Approach: Learning from History

The history of technological advancement offers valuable precedent for this utility-focused perspective. When mechanical calculators first emerged in the 17th century, and later when electronic computers revolutionized computation in the 20th century, society didn't spend decades debating whether these machines were "truly doing math" in a conscious, understanding way. Instead, we focused on their practical value: Could they perform calculations accurately? Could they solve problems that were previously intractable? Could they enhance human capabilities in meaningful ways?

The same pragmatic approach guided our adoption of other transformative technologies. We didn't need to understand whether automobiles "wanted" to move or whether telephones "understood" human speech – we evaluated them based on their ability to transport people and transmit information effectively. This utilitarian framework allowed us to rapidly develop and deploy these technologies, iterating and improving them based on real-world performance rather than abstract philosophical criteria.

This isn't to dismiss philosophical inquiry entirely. Questions about the nature of intelligence, consciousness, and machine cognition are important for our broader understanding of mind and reality. They deserve serious academic attention and may ultimately inform how we design and interact with AI systems. However, in terms of developing and deploying AI technology in the near term, utility should be our north star.

Measuring What Matters: A Framework for Evaluation

By focusing on utility, we can develop more meaningful and actionable metrics for evaluating AI systems. Rather than attempting to measure abstract properties like "understanding" or "consciousness," we can assess concrete capabilities and outcomes.

Task completion accuracy provides a straightforward measure of an AI system's effectiveness in its intended domain. For a diagnostic AI, this might mean the percentage of correct identifications in medical imaging. For a language model, it could involve accuracy in translation tasks or the relevance of generated responses. These metrics are quantifiable, comparable across systems, and directly related to practical value.
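As a rough illustration of how simple this kind of metric can be, the sketch below computes accuracy as the share of outputs that match a reference answer. The function name `task_accuracy` and the sample labels are hypothetical, invented for this example; they do not come from any real diagnostic system or dataset.

```python
def task_accuracy(predictions, labels):
    """Return the fraction of predictions that match the reference labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty evaluation set")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Hypothetical outputs for five imaging cases (illustrative data only).
labels = ["benign", "malignant", "benign", "benign", "malignant"]
predictions = ["benign", "malignant", "malignant", "benign", "malignant"]

accuracy = task_accuracy(predictions, labels)
print(f"accuracy = {accuracy:.0%}")
```

Because the same function applies to any system that produces comparable outputs, the resulting number can be compared directly across candidate tools evaluated on the same cases.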

Real-world problem-solving capabilities offer another crucial dimension for evaluation. This goes beyond narrow task performance to consider how well AI systems handle the complexity, ambiguity, and variability of actual applications. A customer service chatbot might perform well on standardized test queries but struggle with unusual requests or emotional customers. An autonomous vehicle might excel in clear weather on well-marked roads but falter in construction zones or severe weather conditions.

Economic and social impact metrics provide broader perspective on AI utility. Does the system reduce costs, increase productivity, or enable new capabilities that weren't previously possible? Are there measurable improvements in quality of life, health outcomes, or educational achievement? These indicators connect AI performance to tangible benefits for individuals and society.

Safety and reliability considerations are equally important, particularly as AI systems are deployed in high-stakes environments. An AI system that performs brilliantly most of the time but occasionally fails catastrophically may be less useful than one with modest but consistent performance. This is especially critical in domains like healthcare, transportation, and financial services where errors can have serious consequences.
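One way to make this trade-off visible is to report worst-case performance alongside the average. The sketch below is a minimal example under stated assumptions: the two systems, their per-scenario scores, and the `summarize` helper are all hypothetical, chosen only to show how a higher average can hide a severe failure.

```python
from statistics import mean


def summarize(scores):
    """Report average and worst-case performance across scenarios."""
    return {"mean": mean(scores), "worst": min(scores)}


# Hypothetical per-scenario scores between 0 and 1 (illustrative data only).
brilliant_but_brittle = [0.99, 0.99, 0.99, 0.99, 0.40]
modest_but_consistent = [0.85, 0.84, 0.86, 0.85, 0.85]

brittle = summarize(brilliant_but_brittle)
consistent = summarize(modest_but_consistent)

print("brittle:   ", brittle)
print("consistent:", consistent)
```

In this made-up data the brittle system has the higher average, yet its worst scenario is far below anything the consistent system produces. In a high-stakes setting, the worst-case figure may be the one that decides which system is more useful.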

Finally, accessibility and ease of use determine whether AI systems can deliver their intended benefits in practice. The most sophisticated AI algorithm is useless if it requires extensive technical expertise to operate or if it's prohibitively expensive for most potential users. Utility must include consideration of how effectively AI capabilities can be deployed and adopted by their intended users.

The Path Forward: Implications for Development and Deployment

This shift toward utility-focused thinking has profound implications for how we approach AI development, regulation, and integration into society.

Development Focus: Rather than attempting to replicate human intelligence in all its complexity, we can focus on creating specialized tools that complement and enhance human capabilities in specific domains. This approach recognizes that different types of intelligence may be optimal for different tasks. An AI system designed for financial analysis doesn't need to appreciate poetry or understand social nuances – it needs to process numerical data accurately and identify meaningful patterns. By abandoning the goal of general human-like intelligence, we can create more effective and efficient systems.

Ethical Considerations: The utility framework also provides clearer guidance for ethical decision-making around AI. Rather than grappling with abstract questions about whether AI systems deserve moral consideration based on their potential consciousness, we can focus on ensuring these tools serve human needs safely and ethically. This includes considerations of fairness, transparency, accountability, and the distribution of benefits and risks. The ethical question becomes not whether AI has rights, but whether our use of AI respects human rights and promotes human flourishing.

Resource Allocation: From a research and development perspective, the utility focus suggests directing efforts toward improving AI's practical capabilities rather than pursuing abstract notions of machine consciousness. This means investing in robustness, reliability, interpretability, and domain-specific performance rather than chasing benchmarks that may not correlate with real-world value.

Conclusion: Embracing Practical Intelligence

The question isn't whether AI is intelligent in some abstract, philosophical sense – it's whether AI systems can reliably and safely perform tasks that benefit humanity. By reframing our conversation around utility rather than intelligence, we can move past philosophical deadlocks and focus on developing AI systems that genuinely enhance human capabilities and solve real problems.

This pragmatic approach doesn't diminish the significance of AI development – if anything, it elevates it by connecting technological progress to concrete human needs. A diagnostic AI that helps doctors save lives, a climate modeling system that informs environmental policy, or an educational tool that personalizes learning for students with different needs – these represent profound achievements regardless of whether the underlying systems experience consciousness or understanding in a human-like way.

As we continue to advance AI technology, maintaining this focus on utility will help ensure that our efforts translate into meaningful benefits for society. The philosophical debates about machine consciousness and intelligence can continue in parallel, enriching our understanding of mind and intelligence more broadly. But they shouldn't distract us from the practical work of making AI truly useful – creating tools that solve real problems, enhance human capabilities, and contribute positively to the world we share.

The measure of AI's success shouldn't be whether it thinks like us, but whether it helps us think better, work more effectively, and live more fulfilling lives. In the end, that's a far more important question than whether machines can truly be called intelligent.

More articles

Newsroom

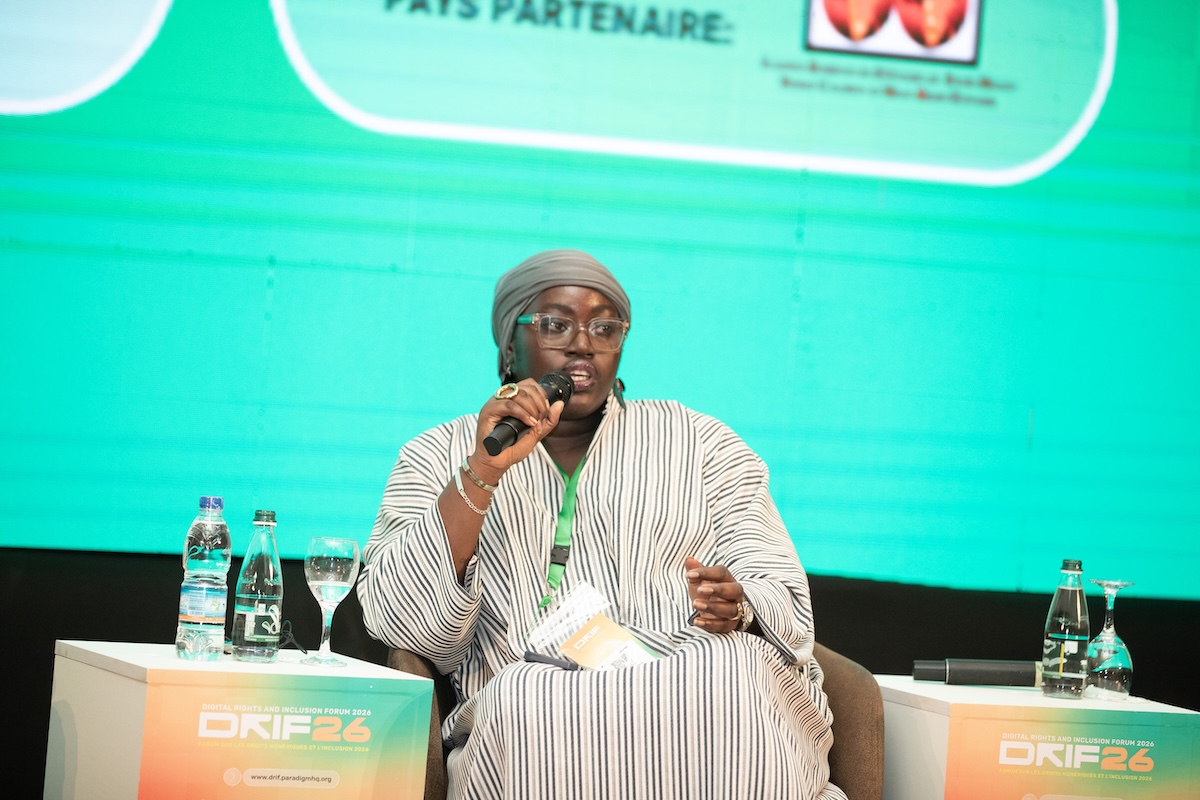

From Minister of Information to the UN: Aicha Thiendella Fall’s Journey into Global Security

Newsroom

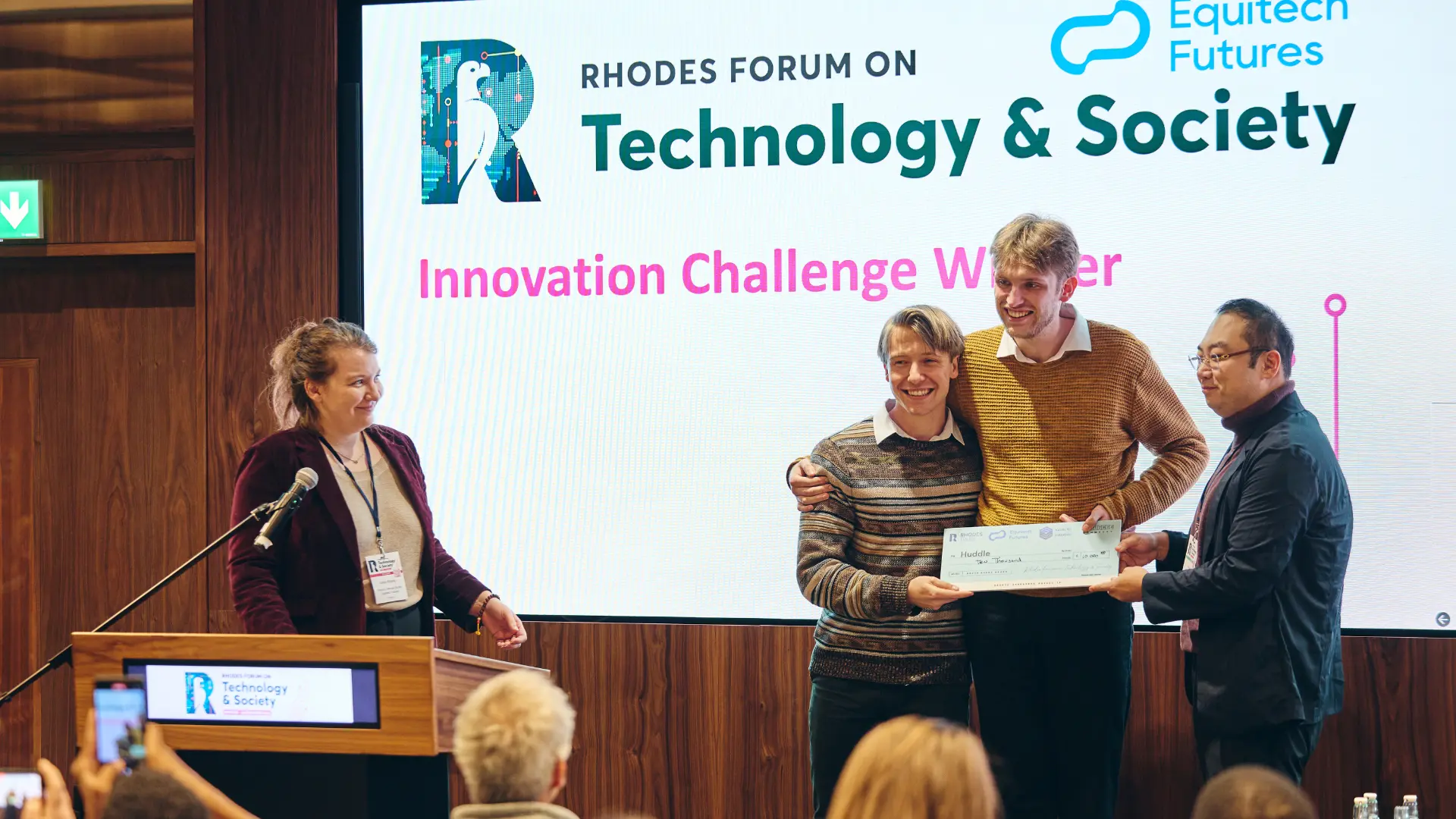

Otieno Collins Junior Reflects on His Journey from Capstone Project to Prize-winning Startup

.webp)

Newsroom

No Innovator Left Behind: How Equitech Futures uses philanthropic capital to maximize impact

Newsroom

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)

.webp)